The Conversion Rate Optimization landscape is littered with misinformation about what you should split-test to grow your Shopify store.

The problem is that there are so few case studies publicly available (for obvious reasons) that the same advice is rehashed, recycled and repeated across dozens of “expert” blogs.

But here’s some advice that’s tried and true:

Conversion Rate Optimization Blunder #1 – Not testing at all (or not testing properly)!

Not running split-tests on your Shopify store is a mortal sin.

Your business will stay stuck if you’re not growing it with improvements in your conversion rate. Worse still is testing using the before-and-after method. This crude method doesn’t use split-testing software at all.

You make a change then watch to see what happens.

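This method sucks because if your orders fluctuate week-to-week, like they do for most e-commerce stores, you can’t tell whether a change in sales came from your tweak or from normal variation. You may make the wrong decision and keep a losing variation that you thought was a winner.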

This is bad news for your business!

Conversion Rate Optimization Blunder #2 – Blindly following ‘best-practice’

The truth is that unless you identify the specific issues YOUR visitors are having with YOUR website, then your split-tests will often yield disappointing results.

But when you uncover your visitors’ sales objections, points of confusion and frustration, you tap into a goldmine of information to base your split-tests on.

And when you address these issues, that’s when you get off-the-chart results.

Not only that, but discovering what persuaded your customers to buy allows you to emphasize this in your sales copy to tip the balance for visitors that are undecided.

Conversion Rate Optimization Blunder #3 – Obsessing over what your competitors and the big players are doing

“Don’t focus on the competition, they’ll never give you money.” – Jeff Bezos

Don’t get me wrong, it’s good to keep one eye on what your competitors are up to. It’s also a good idea to look at what key players in other markets are doing.

You might be able to get some inspiration for a split-test of your own. And often, ideas from a completely unrelated industry can translate into big wins for you.

But where people go wrong is that they assume that, just because their evil nemesis competitor has changed their product page layout, it must be working.

The chances are your competitors haven’t got a clue what they’re doing…

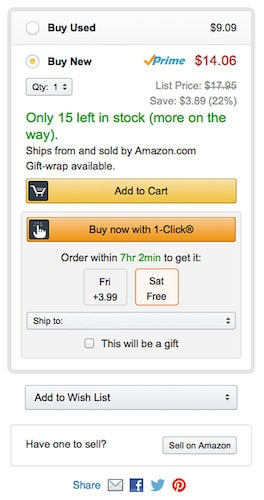

On top of that, if you’re convinced everything Amazon does works, you’re in for a fall. The problem is it’s difficult to tell whether you’re seeing a losing test page or not.

The answer is to test everything for yourself.

Don’t make changes just because you’ve seen Amazon do something.

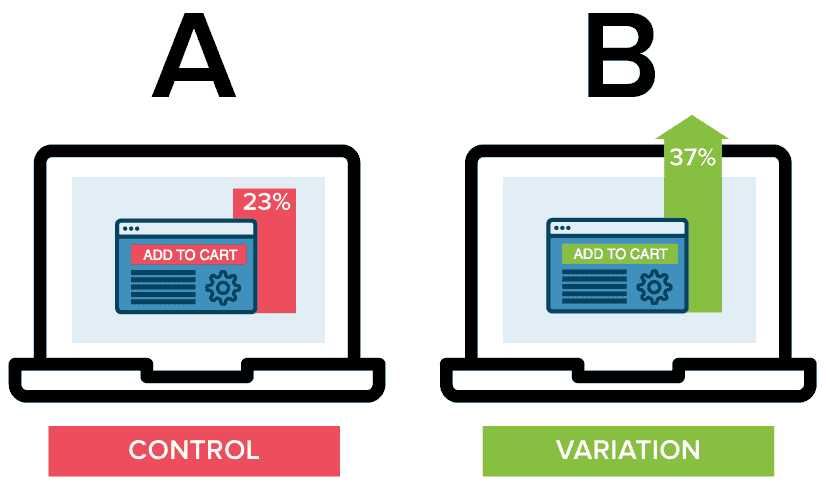

Here’s a split-test run by Amazon:

Control:

But if you were bucketed into the test variation, this is what it looked like:

You could have rushed out and changed your button/call-to-action area thinking that this was the new champion. In fact, this was a losing test and Amazon reverted to their long-standing design.

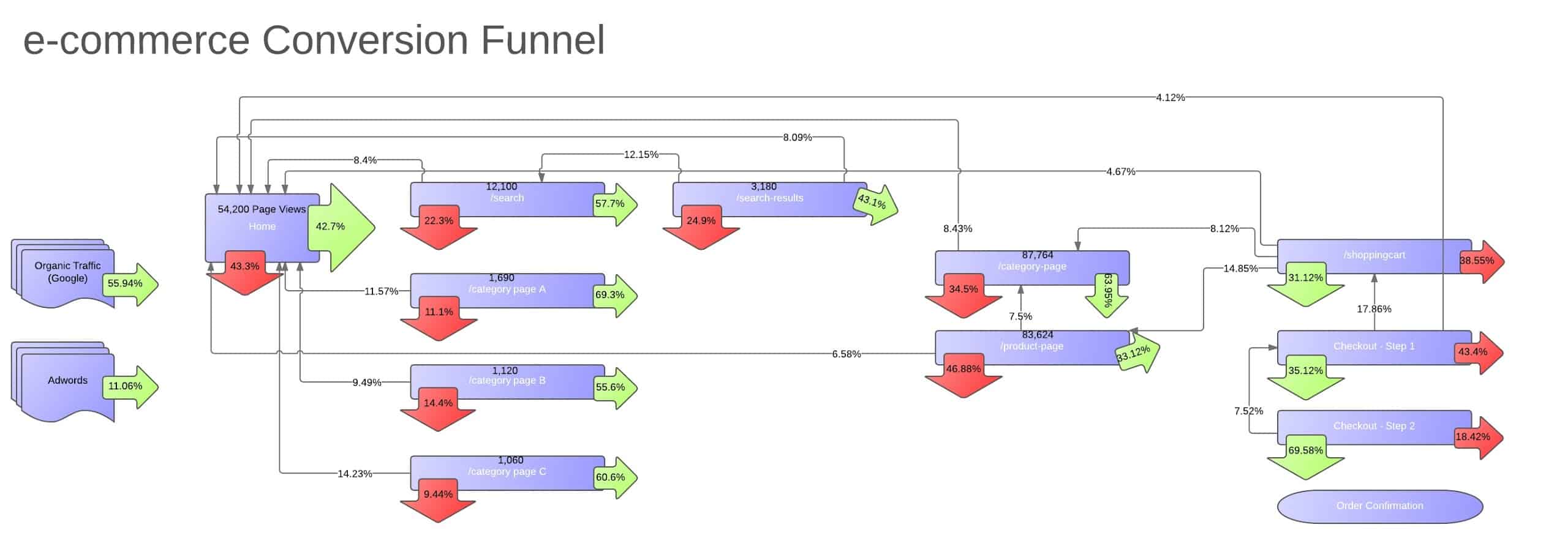

CRO Blunder #4 – Optimizing the wrong pages

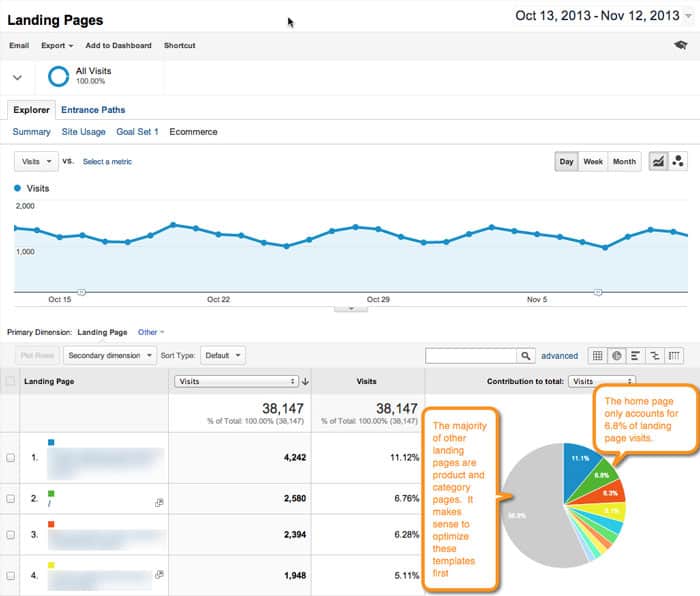

A typical e-commerce funnel diagram showing the leaks (the biggest testing opportunities, i.e. the right pages to optimize).

I recently worked with a client that insisted on optimizing their home page as their first split-test. They were highly emotionally charged about certain aspects of the design that bugged them (there was nothing to indicate that their visitors didn’t like the design). We made some quick changes and ran the test to prove the point that this was the wrong place to start.

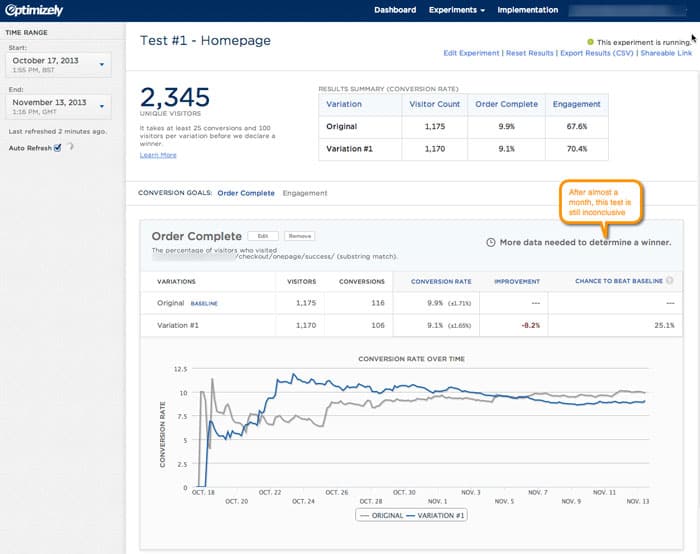

Here are the test results:

After almost a month this test is still inconclusive. Which leads me to the next blunder…

CRO Blunder #5 – Testing small changes

If you’re messing around testing changes like the color of your ‘Add to Cart’ button, you’re likely to achieve very little.

Test big bold changes. There are two distinct benefits to this:

i) You’ll get the results far quicker (I’ll spare you the math)

ii) You’re more likely to significantly grow your business

But there is a catch: your tests need to have a solid hypothesis behind them. If you’re basing them on shoot-from-the-hip guesswork, you may or may not be lucky. If your ideas are backed by visitor intelligence and either answer sales objections or fix a usability issue (or both), the odds are stacked in your favor.
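If you do want a peek at the math behind "far quicker", here is a minimal sketch in Python. It uses the textbook normal-approximation formula for comparing two conversion rates. It is not the calculation any particular split-testing tool runs, and the function name, the 2% baseline and the 95% confidence / 80% power defaults are all assumptions chosen for illustration.

```python
import math
from statistics import NormalDist


def visitors_per_variation(baseline_rate, relative_lift, alpha=0.05, power=0.8):
    """Approximate visitors needed in EACH variation of an A/B test.

    Textbook normal-approximation formula for a two-sided comparison of
    two proportions. All defaults are illustrative assumptions.
    """
    p1 = baseline_rate                          # control conversion rate
    p2 = baseline_rate * (1 + relative_lift)    # rate you hope the challenger hits
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


# Hypothetical store converting at 2%.
small_change = visitors_per_variation(0.02, 0.05)   # hoping for a 5% relative lift
bold_change = visitors_per_variation(0.02, 0.30)    # hoping for a 30% relative lift
print(small_change, bold_change)
```

Under these assumptions, the bold change needs roughly 30 times fewer visitors per variation than the small one, which is why big changes reach a conclusion so much sooner.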

CRO Blunder #6 – Testing too many variables

If you’ve got low traffic (fewer than 10,000 visitors per month), then running too many test variations at once will mean your tests take months to reach a conclusion. You’ll grow frustrated and lose momentum for your split-testing.

With low traffic, it’s best to run a simple A/B test (control and challenger) rather than an A/B/C or A/B/C/D test. The more variations you run, the more your traffic is diluted between the variations and the slower your results.

What’s more, you definitely don’t want to be running multivariate tests. We’ve run dozens of multivariate tests, and every time we add up the increases from the individual variations, the total never matches the increase the multivariate test reports. Because of this, it’s better to run A/B tests than multivariate tests.
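To see how quickly extra variations dilute your traffic, here is a rough sketch in Python. The function name and the figure of 5,000 visitors needed per variation are hypothetical; plug in whatever sample size your own test calls for. It assumes traffic is split evenly and arrives at a steady rate.

```python
import math


def weeks_to_finish(monthly_visitors, num_variations, visitors_needed_per_variation):
    """Estimate how many whole weeks a test needs with an even traffic split.

    num_variations counts the control too, so a plain A/B test is 2.
    """
    weekly_visitors = monthly_visitors * 12 / 52
    weekly_per_variation = weekly_visitors / num_variations
    return math.ceil(visitors_needed_per_variation / weekly_per_variation)


# Hypothetical store: 10,000 visitors a month, 5,000 visitors needed per variation.
for name, variations in [("A/B", 2), ("A/B/C", 3), ("A/B/C/D", 4)]:
    print(name, weeks_to_finish(10_000, variations, 5_000), "weeks")
```

With these made-up numbers the A/B test wraps up in 5 weeks, the A/B/C test takes 7 and the A/B/C/D test takes 9.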

CRO Blunder #7 – Tracking the wrong metrics

The only metric that matters is money-in-the-bank. Period! Judging the performance of your split-test on bounce rate, engagement, click-throughs or the number of people clicking a button is foolhardy. Meaningless metrics like these might make you feel better about whether users like your page but making the sale is what ultimately counts.

The main metrics to track are:

- Conversion Rate

- Average Order Value

- Profit-per-Visitor

- Lifetime customer value

Be careful not to focus on improving your AOV to the detriment of your Conversion Rate.
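Here is a quick sketch in Python of how a higher AOV can hide a worse result. The function name and every number in it are made up for illustration. Lifetime customer value is left out because it needs repeat-purchase data that a single test doesn't give you.

```python
def summarize(visitors, orders, revenue, cost_of_goods):
    """Return conversion rate, AOV and profit-per-visitor for one variation."""
    profit = revenue - cost_of_goods
    return {
        "conversion_rate": orders / visitors,
        "average_order_value": revenue / orders,
        "profit_per_visitor": profit / visitors,
    }


# Hypothetical results for two variations that each received 5,000 visitors.
control = summarize(visitors=5_000, orders=150, revenue=9_000.0, cost_of_goods=5_400.0)
challenger = summarize(visitors=5_000, orders=120, revenue=8_400.0, cost_of_goods=5_040.0)

for name, metrics in [("control", control), ("challenger", challenger)]:
    print(name, metrics)
```

In this invented example the challenger lifts AOV from $60 to $70, but fewer visitors buy, so profit-per-visitor drops. Judge the test on AOV alone and you'd crown the wrong winner.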

There are a bunch of so-called upsell apps in the Shopify app store, but the truth is that many of them mainly cross-sell.

The fact that they don’t understand the difference between cross-selling and upselling should set off alarm bells.

We’ve tested almost all the Shopify “upsell apps” and they’re all effective at increasing your AOV by getting people to buy more items.

But the problem is that it’s at the expense of your conversion rate and lifetime customer value.

This is because they mostly rely on pop-ups that push the cross-sell offers in front of the user. This detracts from the user experience and in turn, causes a drop in conversion rate… No Bueno.

And even if your conversion rate doesn’t take a nosedive, your lifetime customer value often will.

This is because your customers may have persevered and made it all the way through your checkout but the experience was tainted by the irritation of your cross-sell pop-ups.

UpliftHero Saves the Day

After trying every Shopify app made for “upselling”, we decided to create an app of our own, called UpliftHero, built on all our own testing.

Our app helps you cross-sell without losing your customers’ loyalty by displaying the cross-sells immediately below the Add-to-Cart button instead of in an annoying pop-up. It’s designed to still get noticed but is totally non-invasive.

UpliftHero helps improve your AOV without detracting from the user experience or destroying conversion rates as others do.

Want to Shortcut Your Testing?

The Shoptimized™ Theme has 30 conversion-boosting features based on hundreds of split-test results. That’s why it’s the best-converting Shopify theme.